Is Veterans Affairs Protecting Vets Against Surveillance Capitalism?

As the Department of Veterans Affairs moves closer to a privatized model, veterans are wondering how the agency is protecting them against surveillance capitalism.

Under the guise of benevolence, Big Data giants like Apple, Facebook, and Google are courting VA executives for expansive reach into veterans’ health information and other forms of informatics, areas previously protected out of privacy concerns.

On its own, this may seem fine to many of you. After all, who does not like using an iPhone or the search capabilities of Google?

If this describes you, then reading the rest of this article is likely a waste of your time. You probably will not be concerned about surveillance capitalism and how companies like Apple and Google extract and leverage residual data for uses the original user was unaware of.

If you are concerned about how Apple or Google are planning to leverage access to your health information, then keep reading.

So what is surveillance capitalism? And why am I writing about this on New Year’s Eve 2019?

I came across the documentary above on YouTube last night [psst, it’s very interesting, so please take some time to watch it] and was astonished. While I consider myself somewhat well informed about Big Data, I was disappointed to see how the industry appears to be duping the public and Congress without any penalty substantial enough to stop the behavior described in the video.

Born out of the dot-com bubble, “surveillance capitalism” is the residual data market Google discovered around 2001-03 to help improve the company’s bottom line after the bubble burst. “Residual data” was, and still is, used to create targeted predictive technologies for marketing companies, organizations, and government agencies.

In essence, it is the very lucrative practice of scraping metadata off apps and other software tools and then selling that metadata on top of selling the device or software itself.

RELATED: Google Shuts Off Adwords Spending

The metadata gleaned by manufacturers and cell companies from a smartphone is very valuable to marketers and government agencies.

Why does this matter for the Department of Veterans Affairs?

Ever since ProPublica first exposed the Mar-a-Lago trio meetings in 2018, the public has quickly learned how private interests, including Apple, were attempting to court VA executives to use private-sector apps, software, and solutions for Veterans Health Administration health information.

RELATED: The Shadow Rulers Of The VA

At stake was access to more than 20 years of health information stored within VistA belonging to over 20 million veterans, both dead and alive.

Apple Wins

Jump forward to the end of 2019. Apple succeeded in its push to convince VA to use its apps for a variety of purposes, including allowing veterans to download their electronic health records onto an iPhone.

Professor Of Surveillance Capitalism

In the video at the top of this article, Harvard professor Shoshana Zuboff provides a deep look into the world of surveillance capitalism. Professor Zuboff explains why we should all be troubled by how Big Data elites are essentially conning us.

Professor Zuboff says they want our private information, and they boil the proverbial frog slowly by withholding precisely how the data is used and how it is scraped from the data we provide. When they get caught doing something illegal or unethical, they issue an apology and divert the conversation to distract the public.

RELATED: Welcome To The Age Of Surveillance Capitalism

I bring this up because VA is now rolling out various apps created by Apple to transmit your health information from a supposedly secure server to your iPhone. This was supposedly done for benevolent purposes.

RELATED: VA Health Records Now On iPhone

But as Zuboff explains, nothing is as it seems.

Take the Android phone.

The Case Of Android

Many Google executives were excited to create a competitor to the iPhone and charge a competitive price. However, others familiar with how to profit off residual data decided to move the phone’s marketing in a different direction.

RELATED: Identifying User Behavior With Residual Data In Apps

Rather than create a highly profitable phone like the iPhone, the company would instead subsidize Android to make it as cheap as possible, or even free.

The reason?

Profits In Residual Data

The company stood to make even more money by selling the residual data it gathered to vendors, who could then help yet other companies sell products to consumers at precisely the right time.

As a parallel, consider the infiltration of Google Chromebooks into every corner of children’s education, despite mounting evidence that the use of these devices by children is bad for cognitive development.

Ever wonder why your kids’ schools are pushing Google computers and the use of Google apps for education? Is it because Google is benevolent as many school boards have been told? Ever ask what Google is doing with that metadata?

Our local school in Minnesota just mandated the use of Chromebooks by all students this year with limited exceptions.

Making Money On Veterans’ Genomic Data

You may also recall a story I first exposed here about a company called Flow Health. The company had a concerning plan to profit off the metadata it gleaned from our health information, and VA quickly canceled its agreement with Flow Health after I wrote about it.

The agency allegedly realized the agreement may have legal issues.

RELATED: Flow Health Reveals Sketchy Profit Plan For Veteran Genomic Data

I was the only journalist to write about the problem. And that was one company. How many others slip by, accessing our health information right now and scraping off the metadata under the guise of “helping veterans,” only to profit handsomely by exploiting our records?

What Of Cambridge Analytica?

All this may seem fine to many of you, but it takes a different turn when you look at Facebook and the Cambridge Analytica scandal, an obvious example of surveillance capitalism gone awry.

Facebook engineers published government-funded research into ways the company could use subliminal messaging to influence people’s behavior offline.

The researchers concluded they not only could do it but that the individuals being manipulated would have no idea.

Enter Cambridge Analytica. While much of the news media focuses on Russia’s involvement in the 2016 election, it seemingly glosses over England’s role, through Cambridge Analytica, in the very real and confirmed illegal behavior just across the Atlantic Ocean.

This company bragged that it could not only detect what information people share but also use residual data to ascertain the deep, dark secrets and fears many people struggle with but do not knowingly express.

RELATED: Psychological Profiling IDEA Sold To Politicians

By gathering data points from various vendors, companies like Cambridge Analytica were able to manipulate millions during the election cycle.

RELATED: How Cambridge Analytica Mined Data For Voter Influence

Google says it can manipulate 10 million voters.

Home DNA Testing Dangers

Recently, the Department of Defense issued a warning against servicemembers using commercial DNA tools like Ancestry and 23andMe because of the risk such data could pose in the wrong hands.

Who would’ve thought such a seemingly innocuous product could be lumped into this discussion of surveillance capitalism?

The DOD memo read in part:

“Exposing sensitive genetic information to outside parties poses personal and operational risks to Service members,” reads the memo signed by Joseph Kernan, the undersecretary of Defense for intelligence, and James Stewart, the assistant secretary of Defense for manpower.

“These [direct-to-consumer] genetic tests are largely unregulated and could expose personal and genetic information, and potentially create unintended security consequences and increased risk to the joint force and mission,” the memo continues.

The reality is that commercial databases are frequently able to hide behind trade secrets to avoid public exposure of their methods and the data they maintain on us. In many instances, that data can also be sold to the highest bidder.

In the wrong hands, DNA information can be weaponized. DOD likely already has the capability of doing so, and its curious warning is likely the result of some military official having a lightbulb moment while watching an Ancestry commercial.

Speaking of which, how is VA protecting veterans’ genomic data from exploitation?

If Big Data And Healthcare Had A Baby?

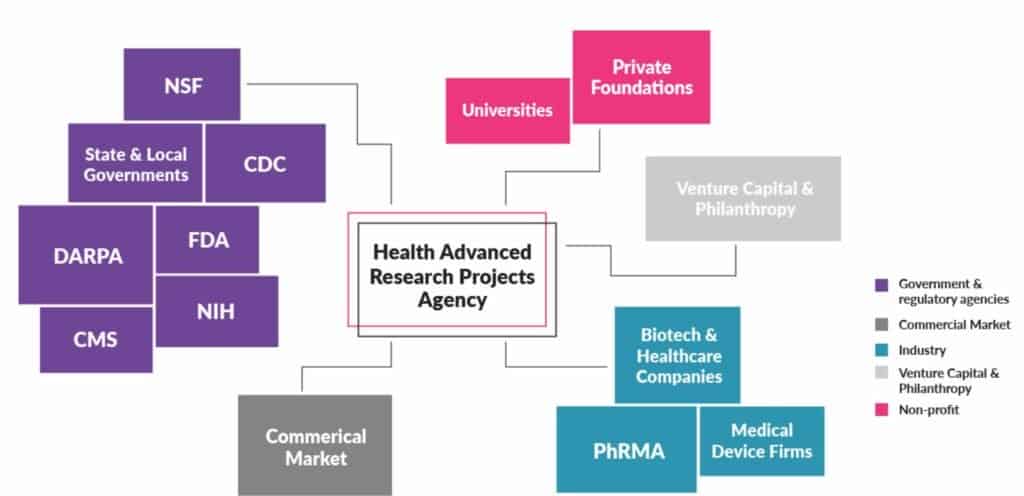

On the horizon are the newest partnerships between Big Data and the healthcare industry, with the potential birth of yet another new agency called HARPA (more on that in a bit).

RELATED: Big Data And Public-Private Partnerships In Healthcare And Research

Through HIPAA releases for supposed research, these companies can pull in a very powerful dataset to market a variety of pharmaceuticals and other healthcare solutions to you based on your behavior online.

RELATED: Pharmaceutical Companies And Their Drugs On Social Media

We already know our news media is highly reliant on funding from pharmaceutical companies, resulting in a shameful lack of coverage of problems within that industry.

Can this impact your rights as veterans?

Possibly. And what happens if your information is not properly protected and is leaked, hijacked, or otherwise compromised?

The government’s current position on its own privacy violations appears to be that it has little obligation or liability. So it can do what it wants with your data.

Proposed HARPA And Red Flag Laws

One group of lobbyists is pushing for President Donald Trump to approve gun rights restrictions based on real-time analysis of data points gathered from in-home technologies, health information, and wearable devices.

This is the most recent evidence of the danger looming in the merger between surveillance capitalists and a government that seems ever more willing these days to hand over our health information.

Through the creation of a new agency called HARPA, modeled after DARPA, some gun control advocates seek to target and profile individuals with mental illness, using surveillance technology and psychologists to limit their access to weapons.

A judge and jury would be replaced by your VA shrink and an Apple Watch.

According to the Washington Post, companies providing services and devices supporting the new initiative would include Google Home, Apple Watch, Fitbit, Amazon Echo, and the usual culprits.

The project is being pushed by the Suzanne Wright Foundation, an organization that supports medical advances in cancer treatment. In its plan, SAFE HOME, short for “Stopping Aberrant Fatal Events by Helping Overcome Mental Extremes,” the organization called for using artificial intelligence to monitor fluctuations in “mental extremes” to curb gun violence.

According to reporting back in August, after the El Paso and Dayton shootings, the Trump administration was considering the new agency.

While some folks think this level of Orwellian fusion within government and its public-private partnerships is a great idea, it poses a great deal of risk and has no basis in research. It also risks profiling people with mental illness without due process or evidence that such individuals are more dangerous than others.

DOD Refutes Predictive Tech

The publication Reason highlighted a 2012 DOD study that refutes the premise of the HARPA plan called SAFE HOME, and it is important to bring up here. As Reason put it:

A 2012 study that the Defense Department commissioned after the 2009 mass shooting at Fort Hood in Texas explains the significance of that fact in an appendix titled “Prediction: Why It Won’t Work.” The appendix observes that “low-base-rate events with high consequence pose a management challenge.” In the case of “targeted violence,” for example, “there may be pre-existing behavior markers that are specifiable.” But “while such markers may be sensitive, they are of low specificity and thus carry the baggage of an unavoidable false alarm rate, which limits feasibility of prediction-intervention strategies.” In other words, even if certain “red flags” are common among mass shooters, almost none of the people who display those signs are bent on murderous violence.

The Defense Department report illustrates the problem with a hypothetical example. “Suppose we actually had a behavioral or biological screening test to identify those who are capable of targeted violent behavior with moderately high accuracy,” the report says. If “a population of 10,000 military personnel…includes ten individuals with extreme violent tendencies, capable of executing an event such as that which occurred at Ft. Hood,” a test that correctly identified eight of those 10 dangerous people would wrongly implicate “1,598 personnel who do not have these violent tendencies.”

That scenario assumes a predictive test that does not actually exist. “We cannot overemphasize that there is no scientific basis for a screening instrument to test for future targeted violent behavior that is anywhere close to being as accurate as the hypothetical example above,” the report says.
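To make the report’s base-rate problem concrete, here is a short Python sketch that reproduces the arithmetic of its hypothetical. The function name `screening_outcomes` is my own invention, and the inputs are the report’s made-up figures (10,000 personnel, 10 dangerous individuals, 8 caught, 1,598 wrongly flagged), not real data.

```python
def screening_outcomes(population, true_cases, true_positives, false_positives):
    """Summarize a hypothetical screening test as a 2x2 confusion matrix.

    All inputs are head counts. The figures used below come from the DOD
    report's hypothetical example, not from any real screening program.
    """
    negatives = population - true_cases            # people without violent tendencies
    false_negatives = true_cases - true_positives  # dangerous people the test misses
    true_negatives = negatives - false_positives   # harmless people correctly cleared
    flagged = true_positives + false_positives     # everyone the test raises an alarm on
    return {
        "flagged": flagged,
        "false_negatives": false_negatives,
        # Share of truly dangerous people the test catches.
        "sensitivity": true_positives / true_cases,
        # Share of harmless people the test correctly clears.
        "specificity": true_negatives / negatives,
        # Chance that a flagged person is actually dangerous.
        "positive_predictive_value": true_positives / flagged,
    }


result = screening_outcomes(population=10_000, true_cases=10,
                            true_positives=8, false_positives=1_598)
for name, value in result.items():
    # Print ratios as percentages and counts as plain integers.
    if isinstance(value, float):
        print(f"{name}: {value:.1%}")
    else:
        print(f"{name}: {value}")
```

Plugging in those figures, the test catches 80 percent of the truly dangerous people and correctly clears about 84 percent of everyone else, yet only 8 of the 1,606 people it flags are actually dangerous. In other words, a red flag from this hypothetical test would be right less than half a percent of the time, which is the “unavoidable false alarm rate” the report warns about.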

In the rush to embrace artificial intelligence, we may be applying these software solutions in inappropriate and dangerous ways based on hysteria rather than science. And by now we should all recognize that the companies’ incentive to play along is rooted not in benevolence but in surveillance capitalism.

Ironically, the SAFE HOME model of surveillance using commercial solutions gained momentum with Trump after the suspicious Dayton and El Paso shootings this summer.

Predicting Violence ‘Doesn’t Make Sense’

The Reason author followed the DOD report excerpt with this:

“According to a copy of the SAFEHOME proposal,” the Post says, “all subjects involved [in the research] would be volunteers,” and “great care would be taken to ‘protect each individual’s privacy,'” while “‘profiling of any kind must be avoided.'” It is hard to see how profiling can be avoided, since the whole premise of the project is that people who fit a certain psychiatric profile are especially prone to mass murder.

Once the research has been completed, of course, the resulting information would be pretty useless if it could be deployed only against volunteers. So how would that work? Would people with certain psychiatric diagnoses be legally required to carry electronic monitors aimed at detecting “small changes that might foretell violence”? How could such a requirement be reconciled with due process or the Fourth Amendment?

Maybe the requirement would be limited to people who pose an especially high risk of violence. But how would they be identified? Since mental health specialists are notoriously bad at predicting violence, SAFEHOME would have to develop two kinds of tests: one that identifies people who are prone to violence and one that predicts when those people are about to commit a crime. “I would love if some new technology suddenly came along that would help us identify violent risk,” Marisa Randazzo, former chief research psychologist for the U.S. Secret Service, told the Post, “but there’s so many things about this idea of predicting violence that [don’t] make sense.”

So, on the back of hysteria, we have a proposal to fully infiltrate homes using surveillance technology for the purpose of using that technology to curtail an individual’s rights.

Dangers Of Technocracy

That would be the final nail in the coffin as we move into a technocratic age in which Americans would be ruled by the technocratic elite President Eisenhower warned us against in the second half of his farewell address.

Does that same lack of liability apply to government contractors providing these services? What if there’s a breach?

Which brings me back to my question about Apple, our health information, privacy, and surveillance capitalism.

In this age of surveillance capitalism, is the Department of Veterans Affairs anticipating these problems and protecting us against the usurpation of our right to privacy? Do we really own our medical data?

This is not a rhetorical question.

I would love to know what you think about privacy and about partnerships with Big Data for solutions that seem good on the surface while little is known about how the residual data will be gathered, used, and stored.

Worrying about records. We should worry more about what they write on those records. Falsifying Veterans’ files and then releasing them. Gaslighting the Veteran to cover up the crimes they commit. But when you have a scumbag puppet like VA Sec. Wilkie, there is nothing more to expect for Veterans but the worst. Everything’s normal at the V.A.?

First: veterans have no civil rights.

Secondly: when did veterans have privacy (HIPAA) at the VA?

*When* will we see ALL veterans receive proper medical care?

*When* will we see ALL veterans receive the benefits we were promised?

*When* will law enforcement agencies start holding the VA reprobates responsible for the harm they’ve caused us?

Good Lord, where did that doomsday excerpt come from? Me and my house will be going home to the Lord Jesus Christ no matter what the case; we may all want to focus on that for help. VA isn’t going to ever be a veteran’s friend on this earth.

NormaRae, let me tell you a short story. I read where Shelly Fitch tells Lem he needs to retrieve his own information and handle his own work for his claim, and I read you, NormaRae, say that the VA isn’t going to ever be a veteran’s friend on this Earth. NormaRae, a person or agency doing the job in adherence to the laws, to honorable ethics, and to honorable accountability does not thereby become a friend of the people they are doing the job for. Today, I witness people who believe that if one does what one is supposed to do to begin with, somehow favorability is happening. Or they believe that doing a job by the laws, which in the end does assist others, is somehow wrong. Many people today are really misconstruing these actions. Say, for example, a veteran applies for whatever; some VA employees almost believe that approving the claim means some type of friendship exists. No, this is not the case; but to the VA it is like brushing backs or doing favors. Delivering to others is not about being friends. I have someone coming by, and I will finish my statement about the VA, the past and today. Friendship is different from doing the job the way it is supposed to be done, with humanness, accountability, and good ethics. Will return.

I have come back to finish. I will illustate with real examples. For example, a few years ago, I called the Regional Office about Chapter 31 Vocational Rehabilitation to ask some questions. Well, I had no idea where some of the VA employees were coming from whatsoever, other than to deny. During the conversation, the VA Regional Office Voc Rehab employee, who was a veteran, made a comment that had something to do with liking me, being my friend, or being nice to me. I can’t remember the exact wording. Anyway, I perceived this as very weird. In other words, what in the hell does this have to do with the issue? I remember my own words more exactly. I said to the Voc Rehab employee, “I am not wanting you to like me or be my friend.” Ben, this was crazy.

Ben, the VA employees are almost thinking, or have adopted a new modality, that honoring veterans’ needs or claims is somehow about being nice to the veteran or being a friend. What in the hell does approving a claim have to do with the price of China? What in the hell does doing a legal, ethical, and accountable job have to do with latching onto niceness or friendship on demand between two people? Ben, one can do his or her job in accordance with the policies, regulations, and laws without having to be a friend. These are two different issues. One can be totally detached from a person, even dislike that person, and still deliver to the person one dislikes. Some in the VA, and some people who do not focus on the facts or the objectivity or the current credible substance, wind up wallowing because they believe that everyone is always about brushing each other’s backs or a good old boy club, or that someone is wanting favorability. This is far from the truth. Another example: an employee of a company drives up to the company door and parks right in front of the camera to clock into the job from the phone or the laptop at the start of the day. The way I look at it, this is an efficient decision. Here we have accountability and efficiency all at once. He or she is accountable to the company for when the day starts, plus it saves time. The person can get on with the employment tasks of the day. Well, now new leadership comes along and stops this process. Here again it boils down to favoritism. This is the problem. I do not give a damn what whoever says about equality. Equality will never exist. There will always be competitiveness. Human nature. There are people who do not act with good measures. It is what it is. And for the leadership to penalize people because they are worried about favorability is total bullshit. Leadership will never be able to make everyone happy.
Get the damn personality out of all issues. See, this is what liberalism, socialism, and Communism are: all subjectivity. Using subjectivity, people can spin the hell out of everything and never get anywhere. Plus, all people are still not happy. Getting back to the VA, how about the veterans who are too sick to deal with the claims? Or stand up? Or pursue the claims process themselves? Well, everyone, back 24 years ago I was one of those vets in that type of state. So Ben, if my situation had not been handled by others, I might not be living today. So Ben, when the VA makes it so that veterans have to do everything on their own, then why are the VA employees receiving a damn paycheck? They are getting paid every day to serve the veterans. This is their job description. This is not about being friends with veteran patients or being nice to a vet. This is why we have a Constitution, which is the law of the land. This is why we have ethics codes and the CFR. This is why we have medical boards that grant licensure to medical professionals. This is about the Department of Veterans Affairs being paid by the American taxpayers of this country to detach and to work according to their job descriptions to deliver services. This is not about the VA being a friend to anyone. This is how many confuse the purpose for why they exist. And when veterans are denied and delayed, the federal government is serving no one but itself. ???

My typo….illustrate. Oops.

Yep

OldMarine, it is time for the return of Jesus Christ for him to walk on this Earth again. The Second Coming of Christ is near. All the signs are in the Book of Revelation in the Bible.

I follow the second coming, the third coming after Christ, and all of the comings before Christ, as when he said, “If you knew Abraham or Moses, you’d know Me. For we are One.”

Silly to believe God loves all men but would ignore more than half the population of the world and doom them to some place called hell. Hell is a state of being, not a place. It is how we react or are reacted to by others. Our reaction to adverse VA employees becomes our hell if we let it.

Sorry, you missed, and don’t see, the evolution of a “New World Order.” Christ started one on a White Horse with a sword; following his words, Christianity was spread by the sword. What was his temptation? Was Mohammed tempted and therefore rode the Black horse to war and conquest more directly?

Lot of deep theological questions to explore. If you look around you’ll find answers. But you’ll have to get out of your religious prejudices to do so.

“An illusion it will be, so large, so vast it will escape their perception.

Those who will see it will be thought of as insane. We will create separate fronts to prevent them from seeing the connection between us. We will behave as if we are not connected to keep the illusion alive. Our goal will be accomplished one drop at a time so as to never bring suspicion upon ourselves. This will also prevent them from seeing the changes as they occur.

“We will always stand above the relative field of their experience for we know the secrets of the absolute. We will work together always and will remain bound by blood and secrecy. Death will come to he who speaks.

“We will keep their lifespan short and their minds weak while pretending to do the opposite. We will use our knowledge of science and technology in subtle ways so they will never see what is happening. We will use soft metals, aging accelerators and sedatives in food and water, also in the air. They will be blanketed by poisons everywhere they turn.

The soft metals will cause them to lose their minds. We will promise to find a cure from our many fronts, yet we will feed them more poison. The poisons will be absorbed through their skin and mouths, they will destroy their minds and reproductive systems. From all this, their children will be born dead, and we will conceal this information.

The poisons will be hidden in everything that surrounds them, in what they drink, eat, breathe and wear. We must be ingenious in dispensing the poisons for they can see far. We will teach them that the poisons are good, with fun images and musical tones. Those they look up to will help. We will enlist them to push our poisons.

“They will see our products being used in film and will grow accustomed to them and will never know their true effect. When they give birth we will inject poisons into the blood of their children and convince them it’s for their help. We will start early on, when their minds are young; we will target their children with what children love most, sweet things.

When their teeth decay we will fill them with metals that will kill their mind and steal their future. When their ability to learn has been affected, we will create medicine that will make them sicker and cause other diseases for which we will create yet more medicine. We will render them docile and weak before us by our power. They will grow depressed, slow and obese, and when they come to us for help, we will give them more poison.

“We will focus their attention toward money and material goods so they may never connect with their inner self. We will distract them with fornication, external pleasures and games so they may never be one with the oneness of it all. Their minds will belong to us and they will do as we say. If they refuse we shall find ways to implement mind-altering technology into their lives.

We will use fear as our weapon. We will establish their governments and establish opposites within. We will own both sides. We will always hide our objective but carry out our plan. They will perform the labor for us and we shall prosper from their toil.

“Our families will never mix with theirs. Our blood must be pure always, for it is the way. We will make them kill each other when it suits us. We will keep them separated from the oneness by dogma and religion. We will control all aspects of their lives and tell them what to think and how. We will guide them kindly and gently letting them think they are guiding themselves.

We will foment animosity between them through our factions. When a light shall shine among them, we shall extinguish it by ridicule, or death, whichever suits us best. We will make them rip each other’s hearts apart and kill their own children. We will accomplish this by using hate as our ally, anger as our friend. The hate will blind them totally, and never shall they see that from their conflicts we emerge as their rulers.

They will be busy killing each other. They will bathe in their own blood and kill their neighbors for as long as we see fit.

“We will benefit greatly from this, for they will not see us, for they cannot see us. We will continue to prosper from their wars and their deaths. We shall repeat this over and over until our ultimate goal is accomplished. We will continue to make them live in fear and anger through images and sounds. We will use all the tools we have to accomplish this. The tools will be provided by their labor. We will make them hate themselves and their neighbors.

“We will always hide the divine truth from them, that we are all one. This they must never know! They must never know that color is an illusion, they must always think they are not equal. Drop by drop, drop by drop we will advance our goal. We will take over their land, resources and wealth to exercise total control over them. We will deceive them into accepting laws that will steal the little freedom they will have. We will establish a money system that will imprison them forever, keeping them and their children in debt.

“When they shall band together, we shall accuse them of crimes and present a different story to the world for we shall own all the media. We will use our media to control the flow of information and their sentiment in our favor. When they shall rise up against us we will crush them like insects, for they are less than that. They will be helpless to do anything for they will have no weapons.

“We will recruit some of their own to carry out our plans, we will promise them eternal life, but eternal life they will never have for they are not of us. The recruits will be called “initiates” and will be indoctrinated to believe false rites of passage to higher realms. Members of these groups will think they are one with us never knowing the truth.

They must never learn this truth for they will turn against us. For their work they will be rewarded with earthly things and great titles, but never will they become immortal and join us, never will they receive the light and travel the stars. They will never reach the higher realms, for the killing of their own kind will prevent passage to the realm of enlightenment. This they will never know.

The truth will be hidden in their face, so close they will not be able to focus on it until it’s too late. Oh yes, so grand the illusion of freedom will be, that they will never know they are our slaves.

“When all is in place, the reality we will have created for them will own them. This reality will be their prison. They will live in self-delusion. When our goal is accomplished a new era of domination will begin. Their minds will be bound by their beliefs, the beliefs we have established from time immemorial.

“But if they ever find out they are our equal, we shall perish then. THIS THEY MUST NEVER KNOW. If they ever find out that together they can vanquish us, they will take action. They must never, ever find out what we have done, for if they do, we shall have no place to run, for it will be easy to see who we are once the veil has fallen. Our actions will have revealed who we are and they will hunt us down and no person shall give us shelter.

“This is the secret covenant by which we shall live the rest of our present and future lives, for this reality will transcend many generations and life spans. This covenant is sealed by blood, our blood. We, the ones who from heaven to earth came.”

“This covenant must NEVER, EVER be known to exist.

It must NEVER, EVER be written or spoken of for if it is, the consciousness it will spawn will release the fury of the PRIME CREATOR upon us and we shall be cast to the depths from whence we came and remain there until the end time of infinity itself.”

Very well written. Who are you quoting?

Nicely written and well researched. Slowly but surely, American citizens are losing many Constitutional freedoms in the name of science. The corporations use their platforms to design algorithms that advance their agenda in insidious ways on the unsuspecting public every day. Technology is inevitable and inexorable, and every generation (sooner or later) will have to draw a line in the sand.

For years, some arms of the Government have surveilled universities and other institutions of higher learning for the best and the brightest to recruit into its covert chambers. There they have developed methods and means to manipulate the masses and/or eliminate them if they present a threat. Russia and the KGB, as well as China's system of classifying its people by how well they fit into its communist culture, are not doing anything so different from what our own Government does. Almost all Americans are on some sort of electronic tether in one way or another.

A little off topic. Yesterday I received requests from the DVA for medical information that is already in my file. Another delay in the "Delay, Deny, Wait Until They Die" DVA modus operandi. My BVA remand hit the 29-month mark on December 20. It is apparent the DRO isn't going to make the 10–29 month target date for a decision or Statement of the Case.

The DVA requires all progress notes to pay the bills for any private care, and they already have them. This request is clearly a delay tactic, or the documents are in Ben's five-mile-high stack of unscanned documents.

Lem – If you believe that the VA is deliberately obstructing your claim, you can ask your state representative to inquire as to its status (I assume you’re talking about a disability claim). Most representatives have someone on their staff who deals specifically with veterans and veterans’ issues. While your representative can’t influence the outcome of your claim, they can make a status inquiry. This kind of inquiry is known within the VA as a “Congressional Inquiry” or a “Congressional”, for short. Having seen firsthand how these are handled, I know that the VA doesn’t take them lightly. There are deadlines for responding to Congressionals and they don’t get swept under the rug the way your claim might be. Since the response(s) go straight to your representative, the VA is not likely to obfuscate. You probably already know that it is not the VA’s responsibility to cull all necessary information that might support your claim from your medical records. It is yours. It seems that you’ve already provided it, though. Best of luck.

Been there and done that. My congresswoman just parroted the DVA response without checking the record I reported to verify the facts in her reply. My claim is 3 years old, and my NOD will be 2 years old on January 8. My BVA remand is 42 months old.

I'm using Godsey v. Wilkie 17-4361 as a template to send a Petition for a Writ of Mandamus to the Court of Appeals for Veterans Claims on January 9.

These letters, particularly the one that said, "We received your NOD on December 30, 2019," are clearly an attempt to use the old "you withdrew your claim" tactic to close the January 8, 2018 NOD of the November 1, 2017 claim, as was done once before on the eBenefits site.

Oh, and I still can’t get copies of my own medical files locally, or get health care.

I can't, we can't: we are not allowed to take electronic devices into the courthouse or to record in the courthouse, in courtrooms or judges' chambers, in the police station, or in other places where the masters and the corrupt want their "safe spaces" to do and say whatever they want to us, leaving us no defense and no record of it or anything else. That includes hospitals, which have their signs up too.

T, and yet they will record a person and doctor the video and audio to show whatever they want. They also try to create false situations that do not exist. In my opinion, law enforcement is starting to get a bad rap because some of them are creating hoaxes themselves. That said, President Trump loves all law enforcement.

Great article, videos, and info, Ben.

Epstein is a scumbag and said "Democracy"; they never say "Republic." Both political parties are putting on one fine show up there while allowing Silicone Valley types to create monopolies and more of a totalitarian police state. There is no difference at all between hackers and a government that just opens every American's files to the Mideast, up to and including their buddies in Red China, et al.

Zuboff. 'They' wait until the noose is around our necks, then write their books, make their money, and play their "games." No one will ask questions or dare mention some issues? Ha. We see what happens when one asks questions or wants some real information, and why and how we become enemies of the state, the VA, or any of the collective or enemy cliques. We even become enemies of, and hated by, VSOs and other veterans, plus the public.

It’s too late for America. I’d suggest the sheep and others do some research on the countless other videos about “Social Credits” etc. We already have massive eyes all over us, no privacy, facial recognition being used, red-flag laws, predictive health care, blah blah, etc. Have fun.

“https://www.newstarget.com/2019-12-03-china-ai-mass-surveillance-cultural-genocide.html”

“https://www.youtube.com/watch?v=lH2gMNrUuEY”

Is 5G really safe? Here it comes.

Ya know they are putting "Bluetooth" on everything, even furnace filters? Where's it going to stop?

I've got some stories about what Googlet did to me when "terminating" my evil Utube channels, then popping up with all kinds of other info (unrelated emails, phone numbers, etc.) that I had to verify to get some stuff back. Then they gave me a page with my real name and way too much info in my profile. Nice, huh.

Welcome to Red China, Gaza Strip, USSR living and that "open air prison camp" I've been calling America for at least twenty-five years. Laugh at me now.

G'day, Ben. Take care.

Great write up Ben. One point… people can come out the other side when it comes to mental illness or mental health issues after proper treatment. Ben a fact.

Red-flag laws would target a person's history, which would be discriminatory and violate people's rights. Ben, I see people causing many problems and shootings who have never been diagnosed with a mental illness. So how about this? I wish to hell they would get away from all of this labeling, because they are destroying lives and penalizing people for no reason.

Put it like this: the VA has always been a prick, even 24 years ago. However, in recent years, their prick attitudes have quadrupled. The VA has never been nice to me at all. That is why the statement the VA Regional Office Voc Rehab employee made to me in recent years was so baffling. I really do not care what the VA thinks about me. In my opinion, if a VA physician acts with compassion, it is a wolf in sheep's clothing. Ben and all, have a wonderful New Year.

Benjamin, I absolutely agree with your statements about the Orwellian fusion of the private and public sectors in government. I agree with your statement on profiling too. Ben, you are correct. Ben, I do not believe that you, with your intuitiveness, are ever wrong. Most of the time I agree with what you write about in your articles, Ben. As for the profiling, it is already happening and has been for a while. This has a lot to do with why I am still in the position that I am in. Ben, I am getting ready to pursue the VA regarding Chapter 31 Vocational Rehabilitation. I have organized your Voc Rehab information and am digging into it. Ben, age really has little to do with one's health, even though the federal government wants it to. They are about to find out.

Benjamin, all sickening to me.

Many Americans do not even have computers and phones. They are better off without all of this technology because, Benjamin, the technology is just used to harm people.

Agree with you, T. In my other statements, I mentioned how the VA did at least try in the past to assist veterans through a more ethical and humane approach. This was 20 years ago. They had wonderful veteran success stories with Chapter 31 Vocational Rehabilitation because of the way they used to deliver the services. True. Go back and look at the late nineties and early 2000s.

But today, Ben, I do not trust the VA to assist me with anything. Look at these Democrat-run cities and local areas. They are a disaster, with high taxes, low salaries, and disregard for life itself. Hey Ben, do you know who is really getting hit with this? A case of paper straws costs over 50 dollars; a case of plastic straws costs less than 30 dollars. The consumer, of course. Democrat-run areas are putting a 1 percent tax on everything plastic. See how they are taking back the money the President is putting into people's pockets through tax cuts. Then again, people do not need a damn straw to drink with. Plus, people just go to areas that are not run by Democrats to buy everything plastic.

Benjamin, the Democratic party is pathetic.

Silicone Valley (San Fernando Valley) is a pioneering region for the pornography industry. One of the various errors herein.